The Linera Manual

Welcome to the reference manual of Linera, the decentralized protocol for real-time Web3 applications.

This documentation is split into two main parts:

- The first section gives a high-level overview of the protocol.

- The second section is intended for developers building applications using the Linera Rust SDK.

If you operate a Linera validator, the Node Operators section points to a dedicated site at https://docs.infra.linera.net/, with deployment artifacts (Helm charts, Docker Compose stack, deploy scripts) at https://github.com/linera-io/linera-artifacts.

NEW: Publish and test your Web3 application on the Linera Testnet!

Install the Linera CLI tool, then follow the instructions on this page to claim a microchain and publish your first application on the current Testnet.

To join our community and get involved in the development of the Linera ecosystem, check out our GitHub repository, our Website, and find us on social media channels such as YouTube, X, Telegram, and Discord.

You can also find introductory and advanced concepts explained in video format in our Developer Workshops series on YouTube.

Let's get started!

Overview

Linera is a decentralized protocol optimized for real-time, agentic Web3 applications that require guaranteed performance for an unlimited number of active users.

The core idea of the Linera protocol is to run many chains of blocks, called microchains, in parallel in one set of validators.

How do microchains work?

Linera users propose blocks directly to the chains that they own. Chains may also be shared with other users. Linera validators ensure that all blocks are validated and finalized in the same way across all the chains.

flowchart LR

user(["User wallet"])

provider(["Provider"])

validators(["Validators"])

subgraph "Validators"

chain1["Personal chain"]

chain2["Temporary chain"]

chain3["Public chain"]

chain4["App chain"]

end

user -- owns --> chain1

user -- shares --> chain2

validators -- own --> chain3

provider -- owns/shares --> chain4

%% Styling

style Validators fill:#1A4456,stroke:#70D4D3,stroke-width:2px,stroke-dasharray:3 3,rx:10,ry:10

style user fill:#8B7355,stroke:#EDE4D2,stroke-width:2px

style provider fill:#A0736B,stroke:#D2E8C8,stroke-width:2px

style chain1 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style chain2 fill:#5A8269,stroke:#D2E8C8,stroke-width:2px

style chain3 fill:#4A5A60,stroke:#F3EEE2,stroke-width:2px

style chain4 fill:#4A7B75,stroke:#70D4D3,stroke-width:2px

While validation rules and security assumptions are the same for all chains, block production in each chain can be configured in a number of ways. In practice, most chains fall into the following categories:

- Personal chains (aka. "user chains") are those with a single owner, i.e. a single user proposing blocks.

- Temporary chains are shared between a few users.

- Public chains, usually dedicated to a particular task in the Linera infrastructure, are fully managed by Linera validators.

- Chains dedicated to a particular application, called app chains, may use either their own infrastructure for block production, a permissionless solution using proof-of-work, or a rotating set of trusted providers.

In order to validate all the chains reliably and at minimal cost, Linera validators are designed to be elastic, meaning that they can independently add or remove computational power (e.g. cloud workers) on demand whenever needed. In turn, this allows Linera applications to scale horizontally by distributing work to the microchains of their users.

What makes Linera real-time and agent-friendly?

Connected clients

To propose blocks and provide APIs to frontends, Linera users rely on a Linera client. Clients synchronize on-chain data in real-time, without trusting third parties, thanks to local VMs and Linera’s native support for notifications. Clients are sparse in the sense that they track only the chains relevant to a particular user.

flowchart LR

subgraph user_device["User device"]

ui["Web UI or AI agent"]

ui <-- GraphQL --> user_local

subgraph linera_client["Linera client"]

user_local["user chain"]

admin_local["admin chain"]

end

end

user_local <-- sync blocks --> user_remote

user_remote -- notify incoming messages --> user_local

admin_local <-- sync blocks --> admin_remote

subgraph validators_clients["Validators"]

user_remote["user chain"]

admin_remote["admin chain"]

end

%% Styling

style user_device fill:#1A4456,stroke:#70D4D3,stroke-width:2px,stroke-dasharray:3 3,rx:10,ry:10

style linera_client fill:#0e2630,stroke:#A0E3E2,stroke-width:1px,stroke-dasharray:2 2,rx:8,ry:8

style validators_clients fill:#1A4456,stroke:#70D4D3,stroke-width:2px,stroke-dasharray:3 3,rx:10,ry:10

style ui fill:#8B7355,stroke:#EDE4D2,stroke-width:2px

style user_local fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style admin_local fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style user_remote fill:#4A5A60,stroke:#F3EEE2,stroke-width:2px

style admin_remote fill:#4A5A60,stroke:#F3EEE2,stroke-width:2px

User interfaces interact with Linera applications by querying and sending high-level commands to local GraphQL services running securely inside the Linera client.

Similarly, discussions between AI agents and Linera applications stay local, hence private and free of charge. This also protects agents against compromised external RPC services.

Linera is the first Layer-1 to allow trustless real-time synchronization of user data on their devices, democratizing low-latency data access and bringing professional-grade security to frontends, customized oracle networks, and AI-trading agents.

Geographic sharding

In the future, Linera validators will be incentivized to operate machines and maintain a presence in a number of key regions. Most microchains will be pinned explicitly to a specific region, giving users of this region the lowest possible latency in their on-chain interactions. Validators will also be incentivized to connect their regional data centers using a low-latency network.

flowchart LR

subgraph region_1["Region 1"]

user1(["User 1"])

chain1["chain 1"]

chain2["chain 2"]

end

subgraph region_2["Region 2"]

chain3["chain 3"]

chain4["chain 4"]

end

user1 --> chain1

chain1 <--> chain2

chain2 <---> chain3

chain2 <---> chain3

chain3 <--> chain4

%% Styling

style region_1 fill:#1A4456,stroke:#70D4D3,stroke-width:2px,stroke-dasharray:3 3,rx:10,ry:10

style region_2 fill:#1A4456,stroke:#70D4D3,stroke-width:2px,stroke-dasharray:3 3,rx:10,ry:10

style user1 fill:#8B7355,stroke:#EDE4D2,stroke-width:2px

style chain1 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style chain2 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style chain3 fill:#4A5A60,stroke:#F3EEE2,stroke-width:2px

style chain4 fill:#4A5A60,stroke:#F3EEE2,stroke-width:2px

Importantly, geographic affinity in Linera is not tied to the time of day, allowing applications to deliver similar performance at night and during working hours. Meanwhile, Linera validators retain the flexibility to downsize and upsize their pool of machines at will to save costs.

How do Linera microchains compare to traditional multi-chain protocols?

Linera is the first blockchain designed to run a virtually unlimited number of chains in parallel, including one dedicated user chain per user wallet.

In traditional multi-chain protocols, each chain usually runs a full blockchain protocol in a separate set of validators. Creating a new chain or exchanging messages between chains is expensive. As a result, the total number of chains is generally limited.

In contrast, Linera is designed to run as many microchains as needed:

- Users only create blocks in their chain when needed;

- Creating a microchain does not require onboarding validators;

- All chains have the same level of security;

- Microchains communicate efficiently using the internal networks of validators;

- Validators are internally sharded (like a regular web service) and may adjust their capacity elastically by adding or removing internal workers;

- Users may run heavy transactions in their microchain without affecting other users.
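To make internal sharding concrete, here is a minimal sketch of how a validator could route each chain to one of its workers. It assumes a simple hash-modulo assignment; `shard_for_chain` and `shard_load` are hypothetical names, and Linera's actual shard assignment may work differently.

```rust
use std::collections::hash_map::DefaultHasher;
use std::hash::{Hash, Hasher};

/// Hypothetical routing rule: hash the chain ID and take it modulo the number of shards.
pub fn shard_for_chain(chain_id: &str, num_shards: u64) -> u64 {
    assert!(num_shards > 0, "a validator needs at least one shard");
    let mut hasher = DefaultHasher::new();
    chain_id.hash(&mut hasher);
    hasher.finish() % num_shards
}

/// Count how many of the given chains are routed to each shard.
pub fn shard_load(chain_ids: &[String], num_shards: u64) -> Vec<usize> {
    let mut load = vec![0usize; num_shards as usize];
    for id in chain_ids {
        load[shard_for_chain(id, num_shards) as usize] += 1;
    }
    load
}
```

Because every block touches a single chain, chains routed to different shards can be processed in parallel. Note that plain hash-modulo routing reassigns most chains whenever the number of shards changes, so an elastic deployment would need a more careful migration scheme than this sketch.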

Main protocol features

Infrastructure

- Finality time under 0.5 seconds for most blocks, including a certificate of execution.

- New microchains created in one transaction from an existing chain.

- No theoretical limit on the number of microchains, and hence on the number of transactions per second (TPS).

- Bridge-friendly block headers compatible with EVM signatures.

On-chain applications

- Rich programming model allowing applications to distribute computation across chains using asynchronous messages, shared immutable data, and event streams.

- Full synchronous composability inside each microchain.

- Support for heavy (multi-second) transactions and direct oracle queries to external web services and data storage layers.

Web client and wallet infrastructure

- Real-time push notifications from validators to web clients.

- Block synchronization and VM execution for selected microchains, allowing instant pre-confirmation of user transactions.

- Trustless reactive programming using familiar Web2 frameworks.

- On-chain applications programmed in Rust to run on Wasm, or Solidity on EVM(*).

Features marked with (*) are under active development on the main branch.

Roadmap

This section outlines our current technical roadmap. Please note that this roadmap is provided for informational purposes only and is subject to change at any time.

%%{init: { 'logLevel': 'debug', 'theme': 'dark' } }%%

timeline

section 2024

Testnet 1 (Archimedes): Multi-user chains

: Data blobs

: Fees

section 2025+

Testnet 2 (Babbage): Web client

: POW public chains

: Block headers

Testnet 3 (Conway): Browser extension & wallet connect

: EVM support

: Block explorer

Testnet 4: Governance

: Tokenomics

: Security audits

Mainnet

Testnet #1 (released Nov 2024)

Codename: Archimedes

SDK

- Released Rust SDK v0.13+

- First Web demos running a Linera client in the browser

- Blob storage for user data

Core protocol

- Blob storage for application bytecode and user data

- Multi-user chains (e.g. used in the on-chain game demo)

- Initial support for fees

Infrastructure

- Fixed number of workers per validator

- Onboarding of 20+ external validators

Testnet #2 (released Apr 2025)

Codename: Babbage

SDK

- Official Web client framework

- Support for native oracles: HTTP queries and non-deterministic computations

- Support for POW public chains

- Simplified user and application addresses

Core protocol

- More scalable reconfigurations

- No more "request-application" operations

- Bridge-friendly block headers compatible with EVM signatures

Infrastructure

- Better hotfix release process

- Support for resizing workers offline

Testnet #3 (released Sep 2025)

Codename: Conway

SDK

- Wallet connect (signing demo with external wallet)

- Event streams (deprecating pub/sub channels)

- Experimental support for EVM

- Compatibility with EVM addresses

Core protocol

- More scalable client with partial chain execution and optimized block synchronization

- Execution cache for faster server-side and client-side block execution

- Simplified chain creation and support for externally created microchains

Infrastructure

- High-TPS configuration

- Software service to support block indexing

Testnet #4

SDK

-

Stable support for EVM

-

Transaction scripts

-

Application upgradability

Core protocol

-

Protocol upgradability, including block format, virtual machines, and system APIs

-

Governance chain

-

Final tokenomics and fees

-

Storage durability

Infrastructure

-

Network performance measurements and validator incentives

-

Security audits

Mainnet and beyond

SDK

-

Account abstraction and fee masters

-

Linera light clients for other contract languages (e.g. Solidity, Sui Move)

Core Protocol

-

Permissionless auditing protocol

-

Performance improvements

Infrastructure

-

Block indexing and block explorer

-

Walrus archives

-

Support for dynamic shard assignment and elasticity

-

Geographic sharding

-

Support for more cloud vendors

-

Native bridges

Getting started

In this section, we will cover the necessary steps to install the Linera toolchain and give a short example to get started with the Linera SDK.

Installation

Let's start with the installation of the Linera development tools.

Overview

The Linera toolchain consists of several crates:

- linera-sdk is the main library used to program Linera applications in Rust.

- linera-service defines a number of binaries, notably linera, the main client tool used to operate developer wallets and start local testing networks.

- linera-storage-service provides a simple database used to run local validator nodes for testing and development purposes.

Requirements

The operating systems currently supported by the Linera toolchain can be summarized as follows:

| Linux x86 64-bit | Mac OS (M1 / M2) | Mac OS (x86) | Windows |
|---|---|---|---|
| ✓ Main platform | ✓ Working | ✓ Working | Untested |

The main prerequisites to install the Linera toolchain are Rust, Wasm, and Protoc. They can be installed as follows on Linux:

- Rust and Wasm

curl --proto '=https' --tlsv1.2 -sSf https://sh.rustup.rs | sh

rustup target add wasm32-unknown-unknown

- Protoc

curl -LO https://github.com/protocolbuffers/protobuf/releases/download/v21.11/protoc-21.11-linux-x86_64.zip

unzip protoc-21.11-linux-x86_64.zip -d $HOME/.local

If ~/.local is not in your path, add it:

export PATH="$HOME/.local/bin:$PATH"

- On certain Linux distributions, you may have to install development packages such as g++, libclang-dev, and libssl-dev.

For macOS support and for additional requirements needed to test the Linera protocol itself, see the installation section on GitHub.

This manual was tested with the following Rust toolchain:

[toolchain]

channel = "1.86.0"

components = [ "clippy", "rustfmt", "rust-src" ]

targets = [ "wasm32-unknown-unknown" ]

profile = "minimal"

Installing from crates.io

You may install the Linera binaries with

cargo install --locked linera-storage-service@0.16.0

cargo install --locked linera-service@0.16.0

and use linera-sdk as a library for Linera Wasm applications:

cargo add linera-sdk@0.16.0

The version number 0.16.0 corresponds to the current Testnet of Linera. The minor version may change frequently but should not induce breaking changes.

Installing from GitHub

Download the source from GitHub:

git clone https://github.com/linera-io/linera-protocol.git

cd linera-protocol

git checkout -t origin/testnet_conway # Current release branch

To install the Linera toolchain locally from source, you may run:

cargo install --locked --path linera-storage-service

cargo install --locked --path linera-service

Alternatively, for developing and debugging, you may instead use the binaries compiled in debug mode, e.g. using export PATH="$PWD/target/debug:$PATH".

This manual was tested against the following commit of the repository:

1996bc5067e19cbde2b036120cea9e22dc57e51c

Getting help

If installation fails, reach out to the team (e.g. on Discord) to help troubleshoot your issue or create an issue on GitHub.

Hello, Linera

In this section, you will learn how to initialize a developer wallet, interact with the current Testnet, run a local development network, then compile and deploy your first application from scratch.

By the end of this section, you will have a microchain on the Testnet and/or on your local network, and a working application that can be queried using GraphQL.

Creating a wallet on the latest Testnet

To interact with the latest Testnet, you will need a developer wallet, a new microchain, and some tokens. These can all be obtained at once by querying the Testnet's faucet service as follows:

linera wallet init --faucet https://faucet.testnet-conway.linera.net

linera wallet request-chain --faucet https://faucet.testnet-conway.linera.net

If you obtain an error message instead, make sure to use a Linera toolchain compatible with the current Testnet.

A Linera Testnet is a deployment of the Linera protocol used for testing. A deployment consists of a number of validators, each of which runs a frontend service (aka. linera-proxy), a number of workers (aka. linera-server), and a shared database (by default linera-storage-service).

Using a local test network

Another option is to start your own local development network. To do so, run the following command:

linera net up --with-faucet --faucet-port 8080

This will start a validator with the default number of shards and start a faucet.

Now, we're ready to create a developer wallet by running the following commands in a separate shell:

linera wallet init --faucet http://localhost:8080

linera wallet request-chain --faucet http://localhost:8080

A wallet is valid for the lifetime of its network. Every time a local network is restarted, the wallet needs to be removed and created again.

Working with several developer wallets and several networks

By default, the linera command looks for wallet files located in a configuration path determined by your operating system. If you prefer to choose the location of your wallet files, you may optionally set the variables LINERA_WALLET, LINERA_KEYSTORE and LINERA_STORAGE as follows:

DIR=$HOME/my_directory

mkdir -p $DIR

export LINERA_WALLET="$DIR/wallet.json"

export LINERA_KEYSTORE="$DIR/keystore.json"

export LINERA_STORAGE="rocksdb:$DIR/wallet.db"

Choosing such a directory can be useful to work with several networks because a wallet is always specific to the network where it was created.

We refer to the wallets created by the linera CLI as "developer wallets" because they are operated from a developer tool and merely meant for testing and development.

Production-grade user wallets are generally operated by a browser extension, a mobile application, or a hardware device.

Interacting with the Linera network

To check that the network is working, you can synchronize your chain with the rest of the network and display the chain balance as follows:

linera sync

linera query-balance

You should see an output number, e.g. 10.

Building an example application

Applications running on Linera are Wasm bytecode. Each validator and client has a built-in Wasm virtual machine (VM) which can execute bytecode.

Let's build the counter application from the examples/ subdirectory of the Linera testnet branch:

cd examples/counter && cargo build --release --target wasm32-unknown-unknown

Publishing your application

To publish the bytecode and create an application from it on your local network, use the publish-and-create command of the linera client and provide:

- The location of the contract bytecode

- The location of the service bytecode

- The JSON encoded initialization arguments

linera publish-and-create \
target/wasm32-unknown-unknown/release/counter_{contract,service}.wasm \
--json-argument "42"

Congratulations! You've published your first application on Linera!

Querying your application

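Now let's query your application to get the current counter value. To do that, we need to run the client in service mode, which exposes a number of APIs locally that we can use to interact with applications on the network. If the faucet of your local network is already listening on port 8080, pick another port for the service (e.g. 8081) and adjust the URLs below accordingly.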

linera service --port 8080

Navigate to http://localhost:8080 in your browser to access GraphiQL, the GraphQL IDE. We'll look at this in more detail in a later section; for now, list the applications deployed on your default chain by running:

query {

applications(chainId: "...") {

id

description

link

}

}

where ... is replaced by the chain ID shown by linera wallet show.

Since we've only deployed one application, the results returned have a single entry.

At the bottom of the returned JSON there is a field link. To interact with your application, copy and paste the link into a new browser tab.

Finally, to query the counter value, run:

query {

value

}

This will return a value of 42, which is the initialization argument we specified when deploying our application.

Core concepts

We now describe some of the core concepts of the Linera protocol in greater detail.

Microchains

This section provides an introduction to microchains, the main building block of the Linera Protocol. For a more formal treatment refer to the whitepaper.

Background

A microchain is a chain of blocks describing successive changes to a shared state. We will use the terms chain and microchain interchangeably. Linera microchains are similar to the familiar notion of blockchain, with the following important specificities:

- An arbitrary number of microchains can coexist in a Linera network, all sharing the same set of validators and the same level of security. Creating a new microchain only takes one transaction on an existing chain.

- The task of proposing new blocks in a microchain can be assumed either by validators or by end users (or rather their wallets) depending on the configuration of a chain. Specifically, microchains can be single-owner, multi-owner, or public, depending on who is authorized to propose blocks.
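The following minimal sketch illustrates what "a chain of blocks describing successive changes" means in practice: each block carries its height and the hash of its predecessor, and a block is only accepted if it extends the current tip. The `Block` and `Microchain` types are hypothetical simplifications, not Linera's block format, and `DefaultHasher` stands in for a cryptographic hash.

```rust
use std::collections::hash_map::DefaultHasher;
use std::hash::{Hash, Hasher};

/// Hypothetical, simplified block (not Linera's actual block format).
#[derive(Clone, Debug, Hash)]
pub struct Block {
    pub height: u64,
    pub previous_hash: Option<u64>,
    pub operations: Vec<String>,
}

impl Block {
    /// Non-cryptographic stand-in for a block hash.
    pub fn digest(&self) -> u64 {
        let mut hasher = DefaultHasher::new();
        self.hash(&mut hasher);
        hasher.finish()
    }
}

/// A microchain modeled as an append-only list of linked blocks.
#[derive(Debug, Default)]
pub struct Microchain {
    pub blocks: Vec<Block>,
}

impl Microchain {
    /// Height that the next block must have.
    pub fn next_height(&self) -> u64 {
        self.blocks.len() as u64
    }

    /// Accept `block` only if it extends the current tip: correct height and
    /// correct predecessor hash. A second block at a used height is refused.
    pub fn append(&mut self, block: Block) -> Result<(), String> {
        if block.height != self.next_height() {
            return Err(format!(
                "expected height {}, got {}",
                self.next_height(),
                block.height
            ));
        }
        let tip_hash = self.blocks.last().map(Block::digest);
        if block.previous_hash != tip_hash {
            return Err("previous hash does not match the chain tip".to_string());
        }
        self.blocks.push(block);
        Ok(())
    }
}
```

In the real protocol, validators additionally rely on signatures and certificates; this sketch only shows the linking rule between successive blocks.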

Cross-chain messaging

In traditional networks with a single blockchain, every transaction can access the application's entire execution state. This is not the case in Linera where the state of an application is spread across multiple microchains, and the state on any individual microchain is only affected by the blocks of that microchain.

Cross-chain messaging is a way for different microchains to communicate with each other asynchronously. This method allows applications and data to be distributed across multiple chains for better scalability. When an application on one chain sends a message to itself on another chain, a cross-chain request is created. These requests are implemented using remote procedure calls (RPCs) within the validators' internal network, ensuring that each request is executed only once.

Instead of immediately modifying the target chain, messages are first placed in the target chain's inbox. When an owner of the target chain creates its next block, they may reference a selection of messages taken from the current inbox in the new block. This executes the selected messages and applies their effects to the chain state.

Below is an example set of chains sending asynchronous messages to each other over consecutive blocks.

```
        ┌───┐     ┌───┐     ┌───┐
Chain A │   ├────►│   ├────►│   │
        └───┘     └───┘     └───┘
                              ▲
                    ┌─────────┘
                    │
        ┌───┐     ┌─┴─┐     ┌───┐
Chain B │   ├────►│   ├────►│   │
        └───┘     └─┬─┘     └───┘
                    │         ▲
                    │         │
                    ▼         │
        ┌───┐     ┌───┐     ┌─┴─┐
Chain C │   ├────►│   ├────►│   │
        └───┘     └───┘     └───┘
```

The Linera protocol allows receivers to discard messages but not to change the ordering of selected messages inside the communication queue between two chains. If a selected message fails to execute, the wallet will automatically reject it when proposing the receiver's block. The current implementation of the Linera client always selects as many messages as possible from inboxes, and never discards messages unless they fail to execute.
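The inbox rules above can be modeled in a few lines. This is a sketch under simplifying assumptions: a single queue between one sender and one receiver, a balance as the only state, and a caller-supplied `execute` function that must leave the balance untouched when it fails. `ReceiverChain`, `Message`, and `Outcome` are hypothetical names, not the Linera SDK API.

```rust
use std::collections::VecDeque;

/// Hypothetical message travelling on the queue between two chains.
#[derive(Clone, Debug, PartialEq)]
pub struct Message {
    pub amount: u64,
}

/// What happened to one selected message in the receiver's new block.
#[derive(Debug, PartialEq)]
pub enum Outcome {
    Accepted(Message),
    Rejected(Message),
}

/// Hypothetical receiver state: a balance plus the inbox for one sender.
pub struct ReceiverChain {
    pub balance: u64,
    pub inbox: VecDeque<Message>,
}

impl ReceiverChain {
    /// Delivery only queues the message; the state is unchanged until a block runs it.
    pub fn deliver(&mut self, message: Message) {
        self.inbox.push_back(message);
    }

    /// Build the next block from up to `max` messages, taken strictly in queue order.
    /// A message whose execution fails is rejected; it is never reordered.
    pub fn execute_block<F>(&mut self, max: usize, mut execute: F) -> Vec<Outcome>
    where
        F: FnMut(&mut u64, &Message) -> Result<(), String>,
    {
        let mut outcomes = Vec::new();
        while outcomes.len() < max {
            let Some(message) = self.inbox.pop_front() else { break };
            match execute(&mut self.balance, &message) {
                Ok(()) => outcomes.push(Outcome::Accepted(message)),
                Err(_) => outcomes.push(Outcome::Rejected(message)),
            }
        }
        outcomes
    }
}
```

Calling `execute_block` with a large `max` mirrors the client behavior described above of selecting as many messages as possible, while a failing message is rejected instead of blocking the rest of the queue.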

Chain ownership semantics

Active chains can have one or multiple owners. Chains with zero owners are permanently deactivated.

In Linera, the validators guarantee safety: on each chain, at each height, there is at most one block.

But liveness—actually adding blocks to a chain at all—relies on the owners. There are different types of rounds and owners, optimized for different use cases:

- First, an optional fast round, where a super owner can propose blocks that get confirmed with particularly low latency, optimal for single-owner chains with no contention.

- Then a number of multi-leader rounds, where all regular owners can propose blocks. This works well even if there is occasional, temporary contention: an owner using multiple devices, or multiple people using the same chain infrequently.

- And finally single-leader rounds: These give each regular chain owner a time slot in which only they can propose a new block, without being hindered by any other owners' proposals. This is ideal for chains with many users that are trying to commit blocks at the same time.

The number of multi-leader rounds is configurable: On chains with fluctuating levels of activity, this allows the system to dynamically switch to single-leader mode whenever all multi-leader rounds fail during periods of high contention. Chains that very often have high activity from multiple owners can set the number of multi-leader rounds to 0.
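The round progression described above can be summarized with a small model. It assumes that the fast round, when enabled, is round 0, that the configured multi-leader rounds come next, and that single-leader rounds then rotate through the regular owners in round-robin order. The round-robin policy and the names `RoundKind`, `round_kind`, and `single_leader` are hypothetical; Linera's actual leader selection and timeouts may differ.

```rust
/// Kinds of rounds described in the text, in the order they occur.
#[derive(Debug, PartialEq, Eq, Clone, Copy)]
pub enum RoundKind {
    Fast,
    MultiLeader(u32),
    SingleLeader(u32),
}

/// Map a round number to its kind, assuming: optional fast round first,
/// then `multi_leader_rounds` multi-leader rounds, then single-leader rounds.
pub fn round_kind(round: u32, has_fast_round: bool, multi_leader_rounds: u32) -> RoundKind {
    let mut r = round;
    if has_fast_round {
        if r == 0 {
            return RoundKind::Fast;
        }
        r -= 1;
    }
    if r < multi_leader_rounds {
        RoundKind::MultiLeader(r)
    } else {
        RoundKind::SingleLeader(r - multi_leader_rounds)
    }
}

/// Owner allowed to propose in the given single-leader round, using a
/// hypothetical round-robin policy over the regular owners.
pub fn single_leader<'a>(owners: &[&'a str], index: u32) -> Option<&'a str> {
    if owners.is_empty() {
        None
    } else {
        Some(owners[index as usize % owners.len()])
    }
}
```

With `multi_leader_rounds` set to 0, every round after the optional fast round is a single-leader round, which is the configuration suggested above for chains that very often have high activity from multiple owners.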

For more detail and examples on how to open and close chains, see the wallet section on chain management.

Wallets

As in traditional blockchains, Linera wallets are in charge of holding user private keys. However, instead of signing transactions, Linera wallets are meant to sign blocks and propose them to extend the chains owned by their users.

In practice, wallets include a node which tracks a subset of Linera chains. We will see in the next section how a Linera wallet can run a GraphQL service to expose the state of its chains to web frontends.

The command-line tool

linera is the main way for developers to interact with a Linera network and manage the developer wallets present locally on the system.

Note that this command-line tool is intended mainly for development purposes. Our goal is that end users eventually manage their wallets in a browser extension.

Creating a developer wallet

The simplest way to obtain a wallet with the linera CLI tool is to run the following commands:

linera wallet init --faucet $FAUCET_URL

linera wallet request-chain --faucet $FAUCET_URL

where $FAUCET_URL represents the URL of the network's faucet (see previous section).

Selecting a wallet

The private state of a wallet is conventionally stored in a file wallet.json, its keys are stored in keystore.json, and the state of its node is stored in a file wallet.db.

To switch between wallets, you may use the --wallet, --keystore, and --storage options of the linera tool, e.g. as in:

linera --wallet wallet2.json --keystore keystore2.json --storage rocksdb:wallet2.db:runtime:default

You may also define the environment variables LINERA_STORAGE, LINERA_KEYSTORE, and LINERA_WALLET to the same effect, e.g. LINERA_STORAGE=rocksdb:$PWD/wallet2.db:runtime:default, LINERA_KEYSTORE=$PWD/keystore2.json, and LINERA_WALLET=$PWD/wallet2.json.

Finally, if LINERA_STORAGE_$I, LINERA_KEYSTORE_$I, and LINERA_WALLET_$I are defined for some number I, you may call linera --with-wallet $I (or linera -w $I for short).
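The numbered-variable convention can be illustrated with a small resolver. It assumes, for illustration only, that selecting wallet I means reading LINERA_WALLET_I, LINERA_KEYSTORE_I, and LINERA_STORAGE_I; it is not the linera CLI's source code, and `WalletPaths` and `resolve_wallet` are hypothetical names. The environment is passed in as a map so the logic can be exercised without touching the real process environment.

```rust
use std::collections::HashMap;

/// The three settings that together select one developer wallet.
#[derive(Debug, PartialEq)]
pub struct WalletPaths {
    pub wallet: String,
    pub keystore: String,
    pub storage: String,
}

/// Resolve the settings for wallet number `index` from an environment map.
/// On failure, returns the name of the first missing variable.
pub fn resolve_wallet(env: &HashMap<String, String>, index: u32) -> Result<WalletPaths, String> {
    let get = |base: &str| {
        let name = format!("{base}_{index}");
        env.get(&name).cloned().ok_or(name)
    };
    Ok(WalletPaths {
        wallet: get("LINERA_WALLET")?,
        keystore: get("LINERA_KEYSTORE")?,
        storage: get("LINERA_STORAGE")?,
    })
}
```

In a real program, the map could be built with `std::env::vars().collect()`.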

Chain management

Listing chains

To list the chains present in your wallet, you may use the command show:

linera wallet show

```
╭──────────────────────────────────────────────────────────────────┬──────────────────────────────────────────────────────────────────────────────╮
│ Chain ID                                                         ┆ Latest Block                                                                 │
╞══════════════════════════════════════════════════════════════════╪══════════════════════════════════════════════════════════════════════════════╡
│ 668774d6f49d0426f610ad0bfa22d2a06f5f5b7b5c045b84a26286ba6bce93b4 ┆ Public Key: 3812c2bf764e905a3b130a754e7709fe2fc725c0ee346cb15d6d261e4f30b8f1 │
│                                                                  ┆ Owner: c9a538585667076981abfe99902bac9f4be93714854281b652d07bb6d444cb76      │
│                                                                  ┆ Block Hash: -                                                                │
│                                                                  ┆ Timestamp: 2023-04-10 13:52:20.820840                                        │
│                                                                  ┆ Next Block Height: 0                                                         │
├╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌┼╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌╌┤
│ 91c7b394ef500cd000e365807b770d5b76a6e8c9c2f2af8e58c205e521b5f646 ┆ Public Key: 29c19718a26cb0d5c1d28102a2836442f53e3184f33b619ff653447280ccba1a │
│                                                                  ┆ Owner: efe0f66451f2f15c33a409dfecdf76941cf1e215c5482d632c84a2573a1474e8      │
│                                                                  ┆ Block Hash: 51605cad3f6a210183ac99f7f6ef507d0870d0c3a3858058034cfc0e3e541c13 │
│                                                                  ┆ Timestamp: 2023-04-10 13:52:21.885221                                        │
│                                                                  ┆ Next Block Height: 1                                                         │
╰──────────────────────────────────────────────────────────────────┴──────────────────────────────────────────────────────────────────────────────╯
```

Each row represents a chain present in the wallet. On the left is the unique identifier of the chain, and on the right is metadata for that chain associated with the latest block.

Default chain

Each wallet has a default chain that all commands apply to unless you specify another --chain on the command line.

The default chain is set initially, when the first chain is added to the wallet. You can check the default chain for your wallet by running:

linera wallet show

The chain ID which is in green text instead of white text is your default chain.

To change the default chain for your wallet, use the set-default command:

linera wallet set-default <chain-id>
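The default-chain rule amounts to a simple fallback, sketched below with a hypothetical `Wallet` type that only tracks chain IDs. It is a model of the behavior described above, not the wallet's file format or the CLI's implementation; in particular, the check in `set_default` is an assumption of this sketch.

```rust
/// Hypothetical wallet model: the chains it holds and its default chain.
#[derive(Debug, Default)]
pub struct Wallet {
    pub chains: Vec<String>,
    pub default_chain: Option<String>,
}

impl Wallet {
    /// Add a chain; the first chain added becomes the default.
    pub fn add_chain(&mut self, chain_id: &str) {
        self.chains.push(chain_id.to_string());
        if self.default_chain.is_none() {
            self.default_chain = Some(chain_id.to_string());
        }
    }

    /// Change the default; in this model only chains already in the wallet qualify.
    pub fn set_default(&mut self, chain_id: &str) -> Result<(), String> {
        if self.chains.iter().any(|c| c == chain_id) {
            self.default_chain = Some(chain_id.to_string());
            Ok(())
        } else {
            Err(format!("chain {chain_id} is not in the wallet"))
        }
    }

    /// Chain a command applies to: the explicit `--chain` value if any, else the default.
    pub fn target_chain(&self, explicit: Option<&str>) -> Option<String> {
        explicit.map(str::to_string).or_else(|| self.default_chain.clone())
    }
}
```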

Creating chains

In the Linera protocol, chains are generally created using a transaction from an existing chain.

Create a chain from an existing one for your own wallet

To create a new chain from the default chain of your wallet, you can use the open-chain command:

linera open-chain

This will create a new chain and add it to the wallet. Use the wallet show command to see your existing chains.

Create a new chain from an existing one for another wallet

Creating a chain for another wallet requires two extra steps. Let's initialize a second wallet:

linera --wallet wallet2.json --storage rocksdb:linera2.db wallet init --faucet $FAUCET_URL

First, wallet2 must create an unassigned keypair. The public part of that keypair is then sent to the wallet that will create the chain.

linera --wallet wallet2.json keygen

6443634d872afbbfcc3059ac87992c4029fa88e8feb0fff0723ac6c914088888 # this is the public key for the unassigned keypair

Next, using this public key, the first wallet can open a chain for wallet2.

linera open-chain --to-public-key 6443634d872afbbfcc3059ac87992c4029fa88e8feb0fff0723ac6c914088888

e476187f6ddfeb9d588c7b45d3df334d5501d6499b3f9ad5595cae86cce16a65010000000000000000000000

fc9384defb0bcd8f6e206ffda32599e24ba715f45ec88d4ac81ec47eb84fa111

The first line is the message ID specifying the cross-chain message that creates the new chain. The second line is the new chain's ID.

Finally, to add the chain to wallet2 for the given unassigned key, we use the assign command:

linera --wallet wallet2.json assign --key 6443634d872afbbfcc3059ac87992c4029fa88e8feb0fff0723ac6c914088888 --message-id e476187f6ddfeb9d588c7b45d3df334d5501d6499b3f9ad5595cae86cce16a65010000000000000000000000

Note that in the case of a test network with a faucet, the new wallet and the new chain could also have been created from the faucet directly using:

linera --wallet wallet2.json --storage rocksdb:linera2.db wallet init --faucet $FAUCET_URL

linera --wallet wallet2.json --storage rocksdb:linera2.db wallet request-chain --faucet $FAUCET_URL

Opening a chain with multiple users

The open-chain command is a simplified version of open-multi-owner-chain, which gives you fine-grained control over the set and kinds of owners and rounds for the new chain, and the timeout settings for the rounds. E.g. this creates a chain with two owners and two multi-leader rounds:

linera open-multi-owner-chain \
--chain-id e476187f6ddfeb9d588c7b45d3df334d5501d6499b3f9ad5595cae86cce16a65010000000000000000000000 \
--owner-public-keys 6443634d872afbbfcc3059ac87992c4029fa88e8feb0fff0723ac6c914088888 \
ca909dcf60df014c166be17eb4a9f6e2f9383314a57510206a54cd841ade455e \
--multi-leader-rounds 2

The change-ownership command offers the same options to add or remove owners and change round settings for an existing chain.

Node Service

So far we've used the Linera client as a command-line binary in your terminal. However, the client also acts as a node which:

- Executes blocks

- Exposes a GraphQL API and IDE for dynamically interacting with applications and the system

- Listens for notifications from validators and automatically updates local chains.
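The notification-driven update in the last bullet can be sketched with a standard-library channel standing in for the validators' push notifications. Everything here (`Notification`, `run_listener`, and the height bookkeeping) is a hypothetical model rather than the node service's implementation; a real client would download and execute the missing blocks instead of only recording the new height.

```rust
use std::collections::HashMap;
use std::sync::mpsc::Receiver;

/// Hypothetical notification: a block at `height` was confirmed on `chain_id`.
#[derive(Clone, Debug)]
pub struct Notification {
    pub chain_id: String,
    pub height: u64,
}

/// Consume notifications until every sender is gone, and return, for each
/// tracked chain, the next block height the local node knows about.
pub fn run_listener(notifications: Receiver<Notification>, tracked: &[&str]) -> HashMap<String, u64> {
    let mut next_height: HashMap<String, u64> =
        tracked.iter().map(|id| (id.to_string(), 0)).collect();
    for notification in notifications {
        // Notifications for chains this client does not track are ignored.
        if let Some(next) = next_height.get_mut(&notification.chain_id) {
            // A real node would fetch and execute the missing blocks here.
            *next = (*next).max(notification.height + 1);
        }
    }
    next_height
}
```

Ignoring notifications for untracked chains reflects the sparse clients described in the overview, which only follow the chains relevant to their user.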

To interact with the node service, run linera in service mode:

linera service

This will run the node service on port 8080 by default (this can be overridden using the --port flag).

A note on GraphQL

Linera uses GraphQL as the query language for interfacing with different parts of the system. GraphQL enables clients to craft queries such that they receive exactly what they want and nothing more.

GraphQL is used extensively during application development, for example to query the state of an application from a front-end.

To learn more about GraphQL check out the official docs.

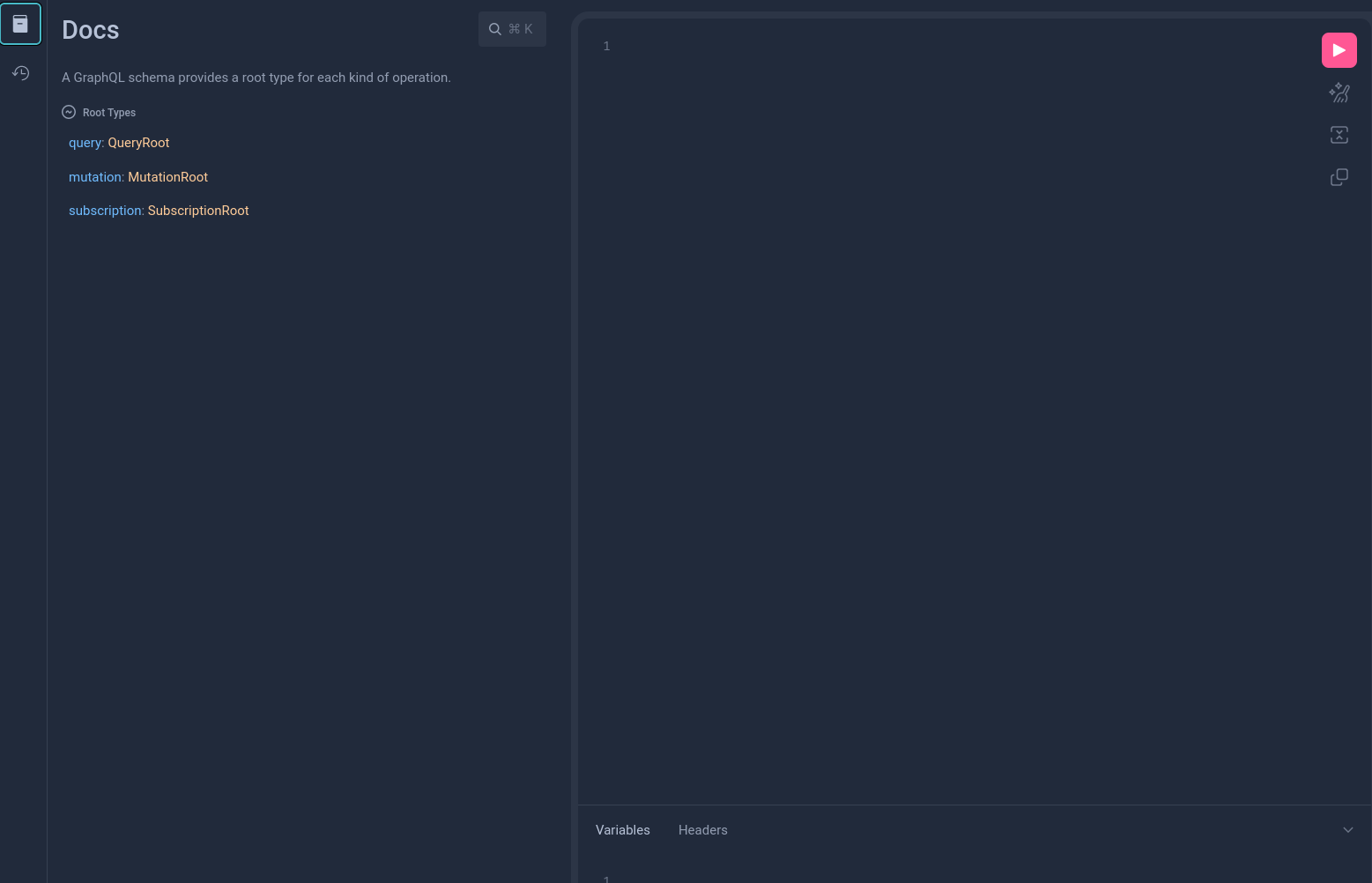

GraphiQL IDE

Conveniently, the node service exposes a GraphQL IDE called GraphiQL. To use GraphiQL, start the node service and navigate to localhost:8080/.

Using the schema explorer on the left of the GraphiQL IDE you can dynamically explore the state of the system and your applications.

GraphQL system API

The node service also exposes a GraphQL API which corresponds to the set of system operations. You can explore the full set of operations by clicking on MutationRoot.

GraphQL application API

To interact with an application, we run the Linera client in service mode. It exposes a GraphQL API for every application running on any owned chain at localhost:8080/chains/<chain-id>/applications/<application-id>.

Navigating there with your browser will open a GraphiQL interface which enables you to graphically explore the state of your application.
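To make the URL layout concrete, here is a minimal std-only Rust sketch that builds an application's endpoint and a GraphQL request body. It assumes the default port 8080 and the usual GraphQL-over-HTTP JSON body of the form {"query": ...}. The helper names are hypothetical, and any chain or application IDs passed to them are placeholders.

```rust
/// Builds the GraphQL endpoint of one application, following the
/// `localhost:<port>/chains/<chain-id>/applications/<application-id>` layout.
/// `port` is 8080 unless the node service was started with `--port`.
fn application_url(port: u16, chain_id: &str, application_id: &str) -> String {
    format!("http://localhost:{port}/chains/{chain_id}/applications/{application_id}")
}

/// Wraps a GraphQL document in the JSON body commonly POSTed to GraphQL servers.
/// Only backslashes and double quotes are escaped, which is enough for
/// single-line queries (newlines are not handled by this sketch).
fn graphql_body(query: &str) -> String {
    let escaped = query.replace('\\', "\\\\").replace('"', "\\\"");
    format!("{{\"query\":\"{escaped}\"}}")
}
```

For instance, graphql_body("query { value }") produces {"query":"query { value }"}, which a client could POST with a Content-Type: application/json header to the URL returned by application_url.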

Connecting AI agents to Linera applications in MCP

Most AI agents understand the Model Context Protocol (MCP for short).

The GraphQL service can be turned into an MCP server using Apollo MCP Server. More information can be found in the mcp-demo repository.

Applications

The programming model of Linera is designed so that developers can take advantage of microchains to scale their applications.

Linera uses the WebAssembly (Wasm) Virtual Machine to execute user applications. Currently, the Linera SDK is focused on the Rust programming language for the backend and TypeScript for the frontend.

Linera applications are structured using the familiar notion of a Rust crate: the external interfaces of an application (including instantiation parameters, operations and messages) generally go into the library part of its crate, while the core of each application is compiled into binary files for the Wasm architecture.

The Application deployment lifecycle

Linera applications are designed to be powerful yet reusable. For this reason, there is a distinction between the bytecode and an application instance on the network.

Applications undergo a lifecycle transition aimed at making development easy and flexible:

- The bytecode is built from a Rust project with the linera-sdk dependency.

- The bytecode is published to the network on a microchain, and assigned an identifier.

- A user can create a new application instance by providing the bytecode identifier and instantiation arguments. This process returns an application identifier which can be used to reference and interact with the application.

- The same bytecode identifier can be used as many times as needed, by as many users as needed, to create distinct applications.
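The following std-only Rust sketch models this lifecycle with a toy registry. Publishing stores the bytecode once and returns an identifier, and each creation yields a new application identifier that points at that bytecode. Registry, BytecodeId, ApplicationId and the sequential numbering are illustrative assumptions, not the Linera data structures.

```rust
use std::collections::HashMap;

/// Hypothetical stand-ins for the two kinds of identifiers described above.
#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash)]
struct BytecodeId(u32);
#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash)]
struct ApplicationId(u32);

/// A toy registry modelling the publish and create steps of the lifecycle.
#[derive(Default)]
struct Registry {
    bytecodes: Vec<(Vec<u8>, Vec<u8>)>, // (contract, service) bytecode pairs
    applications: HashMap<ApplicationId, (BytecodeId, String)>, // instance -> (bytecode, init args)
}

impl Registry {
    /// Publishing stores the bytecode once and returns its identifier.
    fn publish(&mut self, contract: Vec<u8>, service: Vec<u8>) -> BytecodeId {
        self.bytecodes.push((contract, service));
        BytecodeId(self.bytecodes.len() as u32 - 1)
    }

    /// Creating an instance references published bytecode plus instantiation arguments.
    fn create(&mut self, bytecode: BytecodeId, init_args: &str) -> Option<ApplicationId> {
        if bytecode.0 as usize >= self.bytecodes.len() {
            return None; // unknown bytecode: nothing to instantiate
        }
        let id = ApplicationId(self.applications.len() as u32);
        self.applications.insert(id, (bytecode, init_args.to_string()));
        Some(id)
    }
}
```

Creating two instances from the same BytecodeId yields two distinct ApplicationIds that share one bytecode, which is the kind of reuse the lifecycle is designed for.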

Importantly, the application deployment lifecycle is abstracted away from the user, and an application can be published with a single command:

linera publish-and-create <contract-path> <service-path> <init-args>

This will publish the bytecode as well as instantiate the application for you.

Anatomy of an application

An application is broken into two major components, the contract and the service.

The contract is gas-metered, and is the part of the application which executes operations and messages, makes cross-application calls and modifies the application's state. The details are covered in more depth in the application backend guide.

The service is non-metered and read-only. It is used primarily to query the state of an application and populate the presentation layer (think front-end) with the data required for a user interface.

Operations and messages

For this section we'll be using a simplified version of the example application called "fungible" where users can send tokens to each other.

At the system-level, interacting with an application can be done via operations and messages.

Operations are defined by an application developer and each application can have a completely different set of operations. Chain owners then actively create operations and put them in their block proposals to interact with an application. Other applications may also call the application by providing an operation for it to execute; this is called a cross-application call and always happens within the same chain. Operations for cross-application calls may return a response value back to the caller.

Taking the "fungible token" application as an example, an operation for a user to transfer funds to another user would look like this:

extern crate serde;
extern crate linera_sdk;
use serde::{Deserialize, Serialize};
use linera_sdk::linera_base_types::*;

#[derive(Debug, Deserialize, Serialize)]
pub enum Operation {
    /// A transfer from a (locally owned) account to a (possibly remote) account.
    Transfer {
        owner: AccountOwner,
        amount: Amount,
        target_account: Account,
    },
    // Meant to be extended here
}

Messages result from the execution of operations or other messages. Messages can be sent from one chain to another, always within the same application. Block proposers also actively include messages in their block proposal, but unlike with operations, they are only allowed to include them in the right order (possibly skipping some), and only if they were actually created by another chain (or by a previous block of the same chain). Messages that originate from the same transaction are included as a single transaction in the receiving block.

In our "fungible token" application, a message to credit an account would look like this:

extern crate serde;
extern crate linera_sdk;
use serde::{Deserialize, Serialize};
use linera_sdk::linera_base_types::*;

#[derive(Debug, Deserialize, Serialize)]
pub enum Message {
    Credit { owner: AccountOwner, amount: Amount },
    // Meant to be extended here
}

Messages can be marked as tracked by their sender. When a tracked message is rejected, it is marked as bouncing and sent back to the sender chain. This is useful to avoid dropping assets when the receiver is unable or unwilling to accept them.
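Here is a toy model of the tracked/bouncing rule as a std-only Rust sketch. Envelope, Delivery and deliver are hypothetical names. The rule that an already-bouncing message is not bounced a second time is an assumption made here to keep the model simple.

```rust
/// Hypothetical model of a message envelope; `tracked` is chosen by the sender.
#[derive(Clone, Debug, PartialEq)]
struct Envelope {
    amount: u64,
    tracked: bool,
    is_bouncing: bool,
}

/// Outcome of delivering a message to the receiver chain.
#[derive(Debug, PartialEq)]
enum Delivery {
    Accepted,
    Dropped,
    BouncedBack(Envelope),
}

/// Delivers `msg`; `accept` stands in for the receiver's decision (or failure).
fn deliver(msg: Envelope, accept: bool) -> Delivery {
    if accept {
        Delivery::Accepted
    } else if msg.tracked && !msg.is_bouncing {
        // A rejected tracked message returns to the sender, marked as bouncing.
        Delivery::BouncedBack(Envelope { is_bouncing: true, ..msg })
    } else {
        // Untracked messages are lost on rejection. Not re-bouncing an
        // already-bouncing message is an assumption of this sketch.
        Delivery::Dropped
    }
}
```

In the fungible example, a bounced Credit is what lets the sender's chain restore the tokens instead of losing them.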

Composing applications

Within a chain, Linera applications call each other synchronously. Each transaction of a block initiates the first call to an application. The atomicity of message bundles ensures that the messages created by a transaction are either all received or all rejected by the receiver chain.
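The all-or-nothing behavior of bundles can be sketched by applying every message to a copy of the state and committing only if all of them succeed. This std-only Rust model uses hypothetical Msg and AppChain types that loosely follow the crowd-funding example. It illustrates the guarantee, not how Linera implements it.

```rust
/// Hypothetical model: messages created by one transaction travel as one bundle.
#[derive(Debug, Clone, PartialEq)]
enum Msg {
    Assets(u64),
    Pledge(u64),
}

#[derive(Debug, Default, Clone, PartialEq)]
struct AppChain {
    received_assets: u64,
    pledged: u64,
}

impl AppChain {
    /// Applies a whole bundle, or nothing: if any message fails,
    /// the state is left unchanged and the entire bundle is rejected.
    fn receive_bundle(&mut self, bundle: &[Msg]) -> Result<(), String> {
        // Work on a copy so that a failure half-way leaves `self` untouched.
        let mut staged = self.clone();
        for msg in bundle {
            match msg {
                Msg::Assets(n) => staged.received_assets += *n,
                Msg::Pledge(n) if staged.pledged + *n <= staged.received_assets => staged.pledged += *n,
                Msg::Pledge(n) => return Err(format!("pledge of {n} is not covered by received assets")),
            }
        }
        *self = staged; // commit only once every message in the bundle succeeded
        Ok(())
    }
}
```

On a chain holding 10 received and 10 pledged, the bundle [Assets(1), Pledge(5)] fails on the pledge, so the credited asset is rolled back along with it.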

The following example shows a common design pattern where a high-level application (here, a crowd-funding app) calls into another application (here an ERC-20-like application managing a fungible token), resulting in a bundle of two messages.

flowchart LR

subgraph user_chain["User chain"]

block("operation in block") -- calls (with signer) (1) --> app11

subgraph exec_user["Execution state"]

app11["crowdfunding app"] -- calls (with signer) (2) --> app21["fungible token app"]

end

end

subgraph app_chain["Crowdfunding app chain"]

subgraph exec_app["Execution state"]

app12["crowdfunding app"] -- calls (7) --> app22["fungible token app"]

end

bundle("Incoming message bundle<br>[assets, pledge]")

end

app11 -- send pledge (4) --> bundle

app21 -- send assets (3) --> bundle

bundle -- receive pledge (6) --> app12

bundle -- receive assets (5) --> app22

%% Styling

style user_chain fill:#1A4456,stroke:#70D4D3,stroke-width:2px,stroke-dasharray:3 3,rx:10,ry:10

style app_chain fill:#1A4456,stroke:#70D4D3,stroke-width:2px,stroke-dasharray:3 3,rx:10,ry:10

style exec_user fill:#0e2630,stroke:#A0E3E2,stroke-width:1px,stroke-dasharray:2 2,rx:8,ry:8

style exec_app fill:#0e2630,stroke:#A0E3E2,stroke-width:1px,stroke-dasharray:2 2,rx:8,ry:8

style app11 fill:#4A7B75,stroke:#70D4D3,stroke-width:2px

style app12 fill:#4A7B75,stroke:#70D4D3,stroke-width:2px

style app21 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style app22 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style bundle fill:#5A8269,stroke:#D2E8C8,stroke-width:2px

When a user proposes a block in their user chain, operations inherit the authentication of the user (aka signer or origin) that signed the block. Calls may optionally forward this authentication, for instance to allow the transfer of assets.

Authentication

Operations in a block are always authenticated and messages may be authenticated. The signer of a block becomes the authenticator of all the operations in that block. As operations are being executed by applications, messages can be created to be sent to other chains. When they are created, they can be configured to be authenticated. In that case, the message receives the same authentication as the operation that created it. If handling an incoming message creates new messages, those may also be configured to have the same authentication as the received message.

In other words, the block signer can have its authority propagated across chains through a series of messages. This allows applications to safely store user state on chains where the user may not have the authority to produce blocks. The application may also allow only the authorized user to change that state, so that not even the chain owner can override it.

The figure below shows four chains (A, B, C, D) and some blocks produced in them. In this example, each chain is owned by a single owner (aka address). Owners are in charge of producing blocks and sign new blocks using their signing keys. Some blocks show the operations and incoming messages they accept, with the authentication shown in parentheses. All operations are authenticated by the block proposer, and since these are all single-owner chains, the proposer is always the chain owner. Authenticated messages carry the authentication of the operation or message that created them.

One example in the figure is that chain A produced a block with Operation 1, which is authenticated by the owner of chain A (written (a)). That operation sent a message to chain B, and assuming the message was sent with authentication forwarding enabled, it is received and executed in chain B with the authentication of (a). Another example is that chain D produced a block with Operation 2, which is authenticated by the owner of chain D (written (d)). That operation sent a message to chain C, which is executed with the authentication of (d), as in the previous example. Handling that message in chain C produced a new message, which was sent to chain B. That message, when received by chain B, is executed with the authentication of (d).

                      ┌───┐     ┌─────────────────┐     ┌───┐
Chain A owned by (a)  │   ├────►│ Operation 1 (a) ├────►│   │
                      └───┘     └────────┬────────┘     └───┘
                                         │
                                         └────────────┐
                                                      ▼
                                          ┌──────────────────────────┐
                      ┌───┐     ┌───┐     │ Message from chain A (a) │
Chain B owned by (b)  │   ├────►│   ├────►│ Message from chain C (d) │
                      └───┘     └───┘     │ Operation 3 (b)          │
                                          └──────────────────────────┘
                                                      ▲
                                             ┌────────┘
                                             │
                      ┌───┐     ┌────────────┴─────────────┐     ┌───┐
Chain C owned by (c)  │   ├────►│ Message from chain D (d) ├────►│   │
                      └───┘     └──────────────────────────┘     └───┘
                                        ▲
                            ┌───────────┘
                            │
                      ┌─────┴───────────┐     ┌───┐     ┌───┐
Chain D owned by (d)  │ Operation 2 (d) ├────►│   ├────►│   │
                      └─────────────────┘     └───┘     └───┘
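The propagation rules illustrated by the figure can be sketched as a few std-only Rust functions. Owners are modelled as characters. A message's authentication is modelled as an Option that is Some only when forwarding was enabled. These types and function names are hypothetical.

```rust
/// Hypothetical owners, written (a), (b), (c), (d) in the figure.
type Owner = char;

/// A cross-chain message; `authenticated_signer` is `None` unless forwarding was enabled.
#[derive(Clone, Debug, PartialEq)]
struct Message {
    authenticated_signer: Option<Owner>,
}

/// An operation inherits the authentication of the block signer and may
/// forward it to the message it sends.
fn execute_operation(block_signer: Owner, forward: bool) -> Message {
    Message { authenticated_signer: if forward { Some(block_signer) } else { None } }
}

/// Handling a message may create a new one, optionally keeping the same authentication.
fn handle_message(incoming: &Message, forward: bool) -> Message {
    Message {
        authenticated_signer: if forward { incoming.authenticated_signer } else { None },
    }
}
```

Replaying the D → C → B path with forwarding enabled at each hop yields Some('d') on chain B, matching the figure. Disabling forwarding at any hop drops the authentication.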

An example where this is used is in the Fungible application, where a Claim operation allows retrieving money from a chain the user does not control (but the user still trusts will produce a block receiving their message). Without the Claim operation, users would only be able to store their tokens on their own chains, and multi-owner and public chains would have their tokens shared between anyone able to produce a block.

With the Claim operation, users can store their tokens on another chain where they're able to produce blocks or where they trust the owner will produce blocks receiving their messages. Only they are able to move their tokens, even on chains where ownership is shared or where they are not able to produce blocks.
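The following std-only Rust sketch shows the access check that makes this safe. The debit method compares the account owner with the authenticated signer carried by the operation or message, so merely producing blocks on the chain grants no control over the tokens. The Balances type and its method are hypothetical simplifications, not the Fungible application's code.

```rust
use std::collections::HashMap;

/// Hypothetical balances kept by a fungible-style application on one chain.
struct Balances {
    accounts: HashMap<char, u64>,
}

impl Balances {
    /// Moves tokens out of `owner`'s account. The check uses the authenticated
    /// signer carried by the operation or message, not the chain's block producer.
    fn debit(&mut self, authenticated_signer: Option<char>, owner: char, amount: u64) -> Result<(), &'static str> {
        if authenticated_signer != Some(owner) {
            return Err("not authorized");
        }
        let balance = self.accounts.get_mut(&owner).ok_or("no such account")?;
        if *balance < amount {
            return Err("insufficient balance");
        }
        *balance -= amount;
        Ok(())
    }
}
```

In this model, the chain owner cannot debit a user's account even though they produce every block. A Claim-style message carrying the user's authentication can.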

Common Design Patterns

We now explore some common design patterns to take advantage of microchains.

Applications with only user chains

Some applications such as payments only require user chains, hence are fully horizontally scalable:

flowchart LR

user1(["user 1"]) -- initiates transfer --> chain1

subgraph validators_only_users["Validators"]

chain1["user chain 1"] -- "sends assets" --> chain2["user chain 2"]

end

chain2 -- notifies --> user2(["user 2"])

%% Styling

style validators_only_users fill:#1A4456,stroke:#70D4D3,stroke-width:2px,stroke-dasharray:3 3,rx:10,ry:10

style user1 fill:#8B7355,stroke:#EDE4D2,stroke-width:2px

style user2 fill:#8B7355,stroke:#EDE4D2,stroke-width:2px

style chain1 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style chain2 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

Example: the fungible demo application of the Linera codebase.

Client/server applications

Pre-existing applications (e.g. written in Solidity) generally run on a single chain of blocks for all users. Those can be embedded in an app chain to act as a service.

flowchart LR

user1(["user 1"]) -- initiates request --> chain1

user1 ~~~ chain1

provider(["block producer"]) -- initiate response(s) --> chain3

chain1 -- notifies --> user1

chain3 -- notifies --> provider

subgraph validators_cs["Validators"]

chain1["user chain 1"] -- "sends request" --> chain3

chain1 ~~~ chain3

chain2["user chain 2"] --> chain3

chain3["app chain"] -- "sends response" --> chain1

end

%% Styling

style validators_cs fill:#1A4456,stroke:#70D4D3,stroke-width:2px,stroke-dasharray:3 3,rx:10,ry:10

style user1 fill:#8B7355,stroke:#EDE4D2,stroke-width:2px

style provider fill:#A0736B,stroke:#D2E8C8,stroke-width:2px

style chain1 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style chain2 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style chain3 fill:#4A7B75,stroke:#70D4D3,stroke-width:2px

Depending on the nature of the application, the blocks produced in the app chain may be restricted to only contain messages (no operations). This is to ensure that block producers have no influence on a chain, other than selecting incoming messages.

Example: the crowd-funding demo application of the Linera codebase.

Using personal chains to scale applications

User chains are useful to store the assets of their users and initiate requests to app chains. Yet, oftentimes, they can also help applications scale horizontally by taking work out of the app chains.

flowchart LR

user1(["user 1"]) -- submits ZK proof --> chain1

subgraph microchains_scale["Microchains"]

chain1["user chain 1"]

chain0["airdrop chain"]

chain1 -- "sends trusted message《ZK proof is valid》" --> chain0

chain1 ~~~ chain0

chain0 -- "sends tokens" --> chain1

chain2["user chain 2"] --> chain0

end

%% Styling

style microchains_scale fill:#1A4456,stroke:#70D4D3,stroke-width:2px,stroke-dasharray:3 3,rx:10,ry:10

style user1 fill:#8B7355,stroke:#EDE4D2,stroke-width:2px

style chain1 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style chain2 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style chain0 fill:#4A7B75,stroke:#70D4D3,stroke-width:2px

One of the benefits of personal chains is to enable transactions that would be too slow or not deterministic enough for traditional blockchains, including:

- Validating ZK proofs,

- Sending web queries to external oracle services (e.g. AI inference) and other API providers,

- Downloading data blobs from external data availability ("DA") layers and computing app-specific invariants.

Example (unfinished): the airdrop demo application of the Linera project.

Using temporary chains to scale applications

Temporary chains can be created on demand and configured to accept blocks from specific users.

The following design allows a virtually unlimited number of games (e.g. chess games) to be spawned for a given tournament.

flowchart LR

subgraph microchains_scale["Microchains"]

chain1["user chain 1"] <--> chain3

chain2["user chain 2"] <--> chain3

chain3["tournament app chain"] -- creates --> chain0["temporary game chain"]

chain0 -- reports result --> chain3

end

user1(["user 1"]) -- request game --> chain1

user2(["user 2"]) -- request game --> chain2

user1 -- plays --> chain0

user2 -- plays --> chain0

%% Styling

style microchains_scale fill:#1A4456,stroke:#70D4D3,stroke-width:2px,stroke-dasharray:3 3,rx:10,ry:10

style user1 fill:#8B7355,stroke:#EDE4D2,stroke-width:2px

style user2 fill:#8B7355,stroke:#EDE4D2,stroke-width:2px

style chain1 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style chain2 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style chain3 fill:#4A7B75,stroke:#70D4D3,stroke-width:2px

Example: the hex-game demo application of the Linera codebase.

Just-in-time oracles

We have seen that Linera clients connect directly to validators and don't rely on external RPC providers to read on-chain data. This ability to receive secure, censorship-resistant notifications and read data from the network is a game changer: it allows on-chain applications to query certain clients in real time.

For instance, clients may run an AI oracle off-chain in a trusted execution environment (TEE), allowing on-chain applications to extract important information from the Internet.

flowchart LR

subgraph validators_only_users["Validators"]

chain2 -- oracle response --> chain1

chain1["app chain"] -- "oracle query" --> chain2["oracle chain"]

end

subgraph tee["Oracle TEE"]

user2(["oracle client"]) <--> ai["AI oracle"]

end

chain2 -- notifies --> user2

user2 -- submit response --> chain2

ai <--> web((Web))

%% Styling

style validators_only_users fill:#1A4456,stroke:#70D4D3,stroke-width:2px,stroke-dasharray:3 3,rx:10,ry:10

style tee fill:#1A4456,stroke:#A0E3E2,stroke-width:2px,stroke-dasharray:3 3,rx:10,ry:10

style user2 fill:#8B7355,stroke:#EDE4D2,stroke-width:2px

style ai fill:#A0736B,stroke:#D2E8C8,stroke-width:2px

style chain1 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

style chain2 fill:#3A6B7A,stroke:#A0E3E2,stroke-width:2px

Writing Linera Applications

In this section, we'll be exploring how to create Web3 applications using the Linera SDK.

We'll use a simple "counter" application as a running example.

We'll focus on the backend of the application, which consists of two main parts: a smart contract and its GraphQL service.

Both the contract and the service of an application are written in Rust using the crate linera-sdk, and compiled to Wasm bytecode.

This section should be seen as a guide rather than a reference manual for the SDK. For the reference manual, refer to the documentation of the crate.

Creating a Linera Project

To create your Linera project, use the linera project new command. The command should be executed outside the linera-protocol folder. It sets up the scaffolding and requisite files:

linera project new my-counter

linera project new bootstraps your project by creating the following key files:

- Cargo.toml: your project's manifest, filled with the necessary dependencies to create an app;

- rust-toolchain.toml: a file with configuration for the Rust compiler.

NOTE: currently the latest Rust version compatible with our network is 1.86.0. Make sure it's the one used by your project.

- src/lib.rs: the application's ABI definition;

- src/state.rs: the application's state;

- src/contract.rs: the application's contract, and the binary target for the contract bytecode;

- src/service.rs: the application's service, and the binary target for the service bytecode.

When writing Linera applications, it is a convention to use your app's name as a prefix for the names of traits, structs, etc. Hence, in the rest of this manual, we will use CounterContract, CounterService, etc.

Creating the Application State

The state of a Linera application consists of onchain data that are persisted between transactions.

The struct which defines your application's state can be found in src/state.rs. To represent our counter, we're going to use a u64 integer.

While we could use a plain data-structure for the entire application state:

struct Counter {
    value: u64,
}

in general, we prefer to manage persistent data using the concept of "views":

Views allow an application to load persistent data in memory and stage modifications in a flexible way.

Views resemble the persistent objects of an ORM framework, except that they are stored as a set of key-value pairs (instead of a SQL row).

In this case, the struct in src/state.rs should be replaced by

extern crate linera_sdk;
extern crate async_graphql;
use linera_sdk::linera_base_types::*;
use linera_sdk::*;
use std::collections::HashSet;
use linera_sdk::views::{linera_views, RegisterView, RootView, ViewStorageContext};
use crate::linera_sdk::views::View as _;

/// The application state.
#[derive(RootView, async_graphql::SimpleObject)]
#[view(context = ViewStorageContext)]
pub struct Counter {
    pub value: RegisterView<u64>,
    // Additional fields here will get their own key in storage.
}

and the occurrences of Application in the rest of the project should be replaced by Counter.

The derive macro async_graphql::SimpleObject is related to GraphQL queries discussed in the next section.

A RegisterView<T> supports modifying a single value of type T. Other data structures available in the library linera_views include:

- LogView for a growing vector of values;

- QueueView for queues;

- MapView and CollectionView for associative maps; specifically, MapView in the case of static values, and CollectionView when values are other views.

For an exhaustive list of the different constructions, refer to the crate documentation.
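To illustrate the staging idea behind views, here is a std-only Rust model of a register. It reads its value from a key-value store once, keeps writes in memory, and writes them back under its own key on save. This is not the linera-views implementation. The Register type, the byte encoding and the dirty flag are assumptions of this sketch.

```rust
use std::collections::BTreeMap;

/// A toy key-value store standing in for the application's storage.
type Storage = BTreeMap<Vec<u8>, Vec<u8>>;

/// A minimal register modelled after the idea of a `RegisterView<u64>`:
/// the value is read from storage once, and writes are staged in memory until `save`.
struct Register {
    key: Vec<u8>,
    value: u64,
    dirty: bool,
}

impl Register {
    fn load(storage: &Storage, key: &[u8]) -> Self {
        let value = match storage.get(key) {
            Some(bytes) => {
                let mut buf = [0u8; 8];
                buf.copy_from_slice(&bytes[..8]);
                u64::from_le_bytes(buf)
            }
            None => 0, // absent key: default value, as for a freshly created view
        };
        Register { key: key.to_vec(), value, dirty: false }
    }

    fn get(&self) -> u64 {
        self.value
    }

    /// Stages a change in memory only.
    fn set(&mut self, value: u64) {
        self.value = value;
        self.dirty = true;
    }

    /// Writes the staged change back under this field's own key.
    fn save(&mut self, storage: &mut Storage) {
        if self.dirty {
            storage.insert(self.key.clone(), self.value.to_le_bytes().to_vec());
            self.dirty = false;
        }
    }
}
```

Nothing reaches storage until save is called, and a missing key loads as the default value.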

Defining the ABI

The Application Binary Interface (ABI) of a Linera application defines how to interact with this application from other parts of the system. It includes the data structures, data types, and functions exposed by on-chain contracts and services.

ABIs are usually defined in src/lib.rs and compiled across all architectures (Wasm and native).

For a reference guide, check out the documentation of the crate.

Defining a marker struct

The library part of your application (generally in src/lib.rs) must define a public empty struct that implements the Abi trait.

pub struct CounterAbi;

The Abi trait combines the ContractAbi and ServiceAbi traits to include the types that your application exports.

/// A trait that includes all the types exported by a Linera application (both contract
/// and service).
pub trait Abi: ContractAbi + ServiceAbi {}

Next, we're going to implement each of the two traits.

Contract ABI

The ContractAbi trait defines the data types that your application uses in a contract. Each type represents a specific part of the contract's behavior:

/// A trait that includes all the types exported by a Linera application contract.
pub trait ContractAbi {
    /// The type of operation executed by the application.
    type Operation: Serialize + DeserializeOwned + Send + Sync + Debug + 'static;

    /// The response type of an application call.
    type Response: Serialize + DeserializeOwned + Send + Sync + Debug + 'static;

    /// How the `Operation` is deserialized
    fn deserialize_operation(operation: Vec<u8>) -> Result<Self::Operation, String> {
        bcs::from_bytes(&operation)
            .map_err(|e| format!("BCS deserialization error {e:?} for operation {operation:?}"))
    }

    /// How the `Operation` is serialized
    fn serialize_operation(operation: &Self::Operation) -> Result<Vec<u8>, String> {
        bcs::to_bytes(operation)
            .map_err(|e| format!("BCS serialization error {e:?} for operation {operation:?}"))
    }

    /// How the `Response` is deserialized
    fn deserialize_response(response: Vec<u8>) -> Result<Self::Response, String> {
        bcs::from_bytes(&response)
            .map_err(|e| format!("BCS deserialization error {e:?} for response {response:?}"))
    }

    /// How the `Response` is serialized
    fn serialize_response(response: Self::Response) -> Result<Vec<u8>, String> {
        bcs::to_bytes(&response)
            .map_err(|e| format!("BCS serialization error {e:?} for response {response:?}"))
    }
}

All these types must implement the Serialize, DeserializeOwned, Send, Sync, and Debug traits, and have a 'static lifetime.

In our example, we would like to set our Operation type to u64, the amount by which to increment the counter.
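The following std-only Rust sketch shows what choosing u64 as the operation type implies for serialization. It uses a cut-down, hypothetical stand-in for ContractAbi, not the SDK trait. It encodes a u64 as 8 little-endian bytes, which is how BCS represents that integer type. Setting Response to u64 as well is an assumption of the sketch.

```rust
/// A cut-down, hypothetical stand-in for the SDK's `ContractAbi` trait,
/// keeping only the associated types and the operation (de)serialization hooks.
trait ContractAbi {
    type Operation;
    type Response;

    fn serialize_operation(operation: &Self::Operation) -> Vec<u8>;
    fn deserialize_operation(bytes: &[u8]) -> Result<Self::Operation, String>;
}

/// Marker struct, as in the manual.
struct CounterAbi;

impl ContractAbi for CounterAbi {
    // The operation is the amount to add to the counter.
    type Operation = u64;
    // Assumption for this sketch: the response is the counter's new value.
    type Response = u64;

    // BCS encodes a u64 as 8 little-endian bytes; we reproduce that by hand.
    fn serialize_operation(operation: &u64) -> Vec<u8> {
        operation.to_le_bytes().to_vec()
    }

    fn deserialize_operation(bytes: &[u8]) -> Result<u64, String> {
        if bytes.len() != 8 {
            return Err(format!("expected 8 bytes, got {}", bytes.len()));
        }
        let mut buf = [0u8; 8];
        buf.copy_from_slice(bytes);
        Ok(u64::from_le_bytes(buf))
    }
}
```

For instance, the operation 5 serializes to [5, 0, 0, 0, 0, 0, 0, 0], and any input that is not exactly 8 bytes long is rejected.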

Service ABI

The ServiceAbi is in principle very similar to the ContractAbi, just for the service component of your application.

The ServiceAbi trait defines the types used by the service part of your application:

/// A trait that includes all the types exported by a Linera application service.
pub trait ServiceAbi: ContractAbi {
    /// The type of a query receivable by the application's service.
    type Query: Serialize + DeserializeOwned + Send + Sync + Debug + 'static;

    /// The response type of the application's service.
    type QueryResponse: Serialize + DeserializeOwned + Send + Sync + Debug + 'static;
}

For our Counter example, we'll be using GraphQL to query our application, so our ServiceAbi should reflect that by using the Request and Response types of async_graphql as its Query and QueryResponse types:

use async_graphql::{Request, Response};

References

- The full trait definition of Abi can be found here.

- The full Counter example application can be found here.

Writing the Contract Binary

The contract binary is the first component of a Linera application. It can actually change the state of the application.

To create a contract, we need to create a new type and implement the Contract trait for it, which is as follows:

There's quite a bit going on here, so let's break it down and take one method at a time.

For this application, we'll be using the load, execute_operation and store methods.

The contract lifecycle

To implement the application contract, we first create a type for the contract:

This type usually contains at least two fields: the persistent state defined earlier and a handle to the runtime. The runtime provides access to information about the current execution and also allows sending messages, among other things. Other fields can be added to store volatile data that only exists while the current transaction is being executed and is discarded afterwards.

When a transaction is executed, the contract type is created through a call to the Contract::load method. This method receives a handle to the runtime that the contract can use, and should use it to load the application state. For our implementation, we will load the state and create the CounterContract instance:

When the transaction finishes executing successfully, there's a final step where all loaded application contracts are called in order to do any final checks and persist their state to storage. That final step is a call to the Contract::store method, which can be thought of as similar to executing a destructor. In our implementation we will persist the state back to storage:

It's possible to do more than just saving the state, and the Contract finalization section provides more details on that.
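The load/store lifecycle described above can be modelled in std-only Rust. In this sketch, a hypothetical CounterContract is loaded from an in-memory map, executes operations, and is then consumed by store, which writes back only the persistent field. The map, the field names and run_transaction are assumptions, not linera-sdk types.

```rust
use std::collections::HashMap;

/// Hypothetical persistent storage, keyed by a state name.
type Storage = HashMap<&'static str, u64>;

/// Mirrors the shape of `CounterContract`: persistent state plus volatile data.
struct CounterContract {
    value: u64,           // persistent state, loaded in `load`
    operations_seen: u32, // volatile: exists only while the transaction runs
}

impl CounterContract {
    /// Like `Contract::load`: build the contract by loading its state.
    fn load(storage: &Storage) -> Self {
        CounterContract { value: storage.get("counter").copied().unwrap_or(0), operations_seen: 0 }
    }

    fn execute_operation(&mut self, increment: u64) -> u64 {
        self.operations_seen += 1;
        self.value += increment;
        self.value
    }

    /// Like `Contract::store`: consume the contract and persist its state.
    fn store(self, storage: &mut Storage) {
        storage.insert("counter", self.value);
        // `operations_seen` is dropped here and never persisted.
    }
}

/// One transaction in this model: load, execute each operation, then store.
fn run_transaction(storage: &mut Storage, increments: &[u64]) {
    let mut contract = CounterContract::load(storage);
    for &inc in increments {
        contract.execute_operation(inc);
    }
    contract.store(storage);
}
```

Running one transaction that adds 1 and 2 and a second that adds 10 leaves 13 in storage. Meanwhile, operations_seen restarts from zero in every transaction because it is never persisted.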

Instantiating our Application

The first thing that happens when an application is created from a bytecode is that it is instantiated. This is done by calling the contract's Contract::instantiate method.

Contract::instantiate is only called once when the application is created and only on the microchain that created the application.

Deployment on other microchains will use the Default value of all sub-views in the state if the state uses the view paradigm.

For our example application, we'll want to initialize the state of the application to an arbitrary number that can be specified on application creation using its instantiation parameters:

Implementing the increment operation

Now that we have our counter's state and a way to initialize it to any value we would like, we need a way to increment our counter's value. Execution requests from block proposers or other applications are broadly called 'operations'.

To handle an operation, we need to implement the Contract::execute_operation method. In the counter's case, the operation it will be receiving is a u64 which is used to increment the counter by that value:
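The instantiate and increment steps can be sketched as plain std-only Rust functions over a hypothetical Counter state. The sketch also reflects the rule that only the creating chain runs instantiation, while other chains start from the default value. Using checked_add to reject overflow is a choice made for this sketch.

```rust
/// Hypothetical per-chain state of the counter application.
#[derive(Default, Debug, PartialEq)]
struct Counter {
    value: u64,
}

/// Instantiation: runs once, only on the chain that creates the application.
fn instantiate(argument: u64) -> Counter {
    Counter { value: argument }
}

/// The increment operation: the operation payload is the amount to add.
/// Returns the new value; on overflow the state is left unchanged.
fn execute_operation(state: &mut Counter, operation: u64) -> Result<u64, String> {
    state.value = state
        .value
        .checked_add(operation)
        .ok_or_else(|| "counter overflow".to_string())?;
    Ok(state.value)
}
```

Instantiating with 42 and then executing the operation 8 returns 50, whereas a chain that never ran instantiate starts from 0.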

Declaring the ABI

Finally, we link our Contract trait implementation with the ABI of the application:

References

- The full trait definition of Contract can be found here.

- The full Counter example application can be found here.

Writing the Service Binary

The service binary is the second component of a Linera application. It is compiled into a separate bytecode from the contract and is run independently. It is not metered (meaning that querying an application's service does not consume gas), and can be thought of as a read-only view into your application.

Application states can be arbitrarily complex, and most of the time you don't want to expose this state in its entirety to those who would like to interact with your app. Instead, you might prefer to define a distinct set of queries that can be made against your application.

The Service trait is how you define the interface into your application. The Service trait is defined as follows:

Let's implement Service for our counter application.

First, we create a new type for the service, similarly to the contract:

Just like with the CounterContract type, this type usually has two fields: the application state and the runtime. We can omit the fields if we don't use them, so in this example we're omitting the runtime field, since it's only used when constructing the CounterService type.

As before, the macro service! generates the necessary boilerplate for implementing the service WIT interface, exporting the necessary resource types and functions so that the service can be executed.

Next, we need to implement the Service trait for the CounterService type. The first step is to define the Service's associated Parameters type, which describes the global parameters specified when the application is instantiated. In our case, the global parameters aren't used, so we can just specify the unit type:

impl Service for CounterService {
    type Parameters = ();
    // ...
}

Also like in contracts, we must implement a load constructor when implementing the Service trait. The constructor receives the runtime handle and should use it to load the application state:

Services don't have a store method because they are read-only and can't persist any changes back to the storage.

The actual functionality of the service starts in the handle_query method. We will accept GraphQL queries and handle them using the async-graphql crate. To forward the queries to the custom GraphQL handlers we will implement in the next section, we use the following code:

Finally, as before, the following code is needed to incorporate the ABI definitions into your Service implementation:

Adding GraphQL compatibility

Finally, we want our application to have GraphQL compatibility. To achieve this, we need a QueryRoot to respond to queries and a MutationRoot for creating serialized Operation values that can be placed in blocks.

In the QueryRoot, we only create a single value query that returns the counter's value:

In the MutationRoot, we only create one increment method that returns a serialized operation to increment the counter by the provided value:

We haven't included the imports in the above code. If you want the full source code and associated tests check out the examples section on GitHub.
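To illustrate the division of labor between QueryRoot and MutationRoot without the async-graphql machinery, here is a std-only Rust sketch with hypothetical function names. The query only reads the state. The mutation does not touch the state at all and only returns the serialized operation, encoded as 8 little-endian bytes as BCS does for a u64.

```rust
/// Hypothetical read-only view of the counter state used by the service.
struct CounterState {
    value: u64,
}

/// QueryRoot-like handler: answers `value` from a shared reference only.
fn query_value(state: &CounterState) -> u64 {
    state.value
}

/// MutationRoot-like handler: it never touches the state. It only builds the
/// serialized `Operation` (a u64, as 8 little-endian bytes, as BCS would encode it)
/// that a client can then put in a block.
fn increment(value: u64) -> Vec<u8> {
    value.to_le_bytes().to_vec()
}
```

For instance, increment(3) returns [3, 0, 0, 0, 0, 0, 0, 0]. The counter only changes once that operation is included in a block and executed by the contract.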

References

- The full trait definition of Service can be found here.

- The full Counter example application can be found here.

Deploying the Application

The first step to deploy your application is to configure a wallet. This will determine where the application will be deployed: either to a local net or to the public deployment (i.e. a devnet or a testnet).

Local network

To configure the local network, follow the steps in the Getting Started section.

Afterwards, the LINERA_WALLET, LINERA_STORAGE, and LINERA_KEYSTORE environment variables should be set and can be used in the publish-and-create command to deploy the application while also specifying:

- The location of the contract bytecode

- The location of the service bytecode

- The JSON encoded initialization arguments

linera publish-and-create \
  target/wasm32-unknown-unknown/release/my_counter_{contract,service}.wasm \
  --json-argument "42"
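The {contract,service} part of the path is ordinary shell brace expansion. The shell, not linera, turns it into two arguments: the contract bytecode first and the service bytecode second, matching the order of the list above. The function below is a simplified, hypothetical model of that expansion (a single group, no nesting or escapes), included only to show what the command actually receives.

```rust
/// Expands one shell-style brace group, e.g. "a_{x,y}.wasm" becomes
/// ["a_x.wasm", "a_y.wasm"], keeping the order in which the alternatives
/// are written. Simplified: at most one group, no nesting, no escapes.
pub fn expand_braces(pattern: &str) -> Vec<String> {
    let (open, close) = match (pattern.find('{'), pattern.find('}')) {
        (Some(open), Some(close)) if open < close => (open, close),
        // No well-formed group: the argument is passed through unchanged.
        _ => return vec![pattern.to_string()],
    };
    let prefix = &pattern[..open];
    let suffix = &pattern[close + 1..];
    pattern[open + 1..close]
        .split(',')
        .map(|alternative| format!("{prefix}{alternative}{suffix}"))
        .collect()
}
```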

Devnets and Testnets

To configure the wallet for the current testnet while creating a new microchain, the following command can be used:

linera wallet init --faucet https://faucet.testnet-conway.linera.net

linera wallet request-chain --faucet https://faucet.testnet-conway.linera.net

The faucet will provide the new chain with some tokens, which can then be used to deploy the application with the publish-and-create command. It requires specifying:

- The location of the contract bytecode

- The location of the service bytecode

- The JSON encoded initialization arguments

linera publish-and-create \
  target/wasm32-unknown-unknown/release/my_counter_{contract,service}.wasm \
  --json-argument "42"

Interacting with the application

To interact with the deployed application, a node service must be used.

Cross-Chain Messages

On Linera, applications are meant to be multi-chain: They are instantiated on every chain where they are used. An application has the same application ID and bytecode everywhere, but a separate state on every chain. To coordinate, the instances can send cross-chain messages to each other. A message sent by an application is always handled by the same application on the target chain: The handling code is guaranteed to be the same as the sending code, but the state may be different.

For your application, you can specify any serializable type as the Message type in your Contract implementation. To send a message, use the ContractRuntime made available as an argument to the contract's Contract::load constructor. The runtime is usually stored inside the contract object, as we did when writing the contract binary. We can then call ContractRuntime::prepare_message to start preparing a message, and then send_to to send it to a destination chain.

self.runtime
    .prepare_message(message_contents)
    .send_to(destination_chain_id);

After block execution in the sending chain, sent messages are placed in the target chains' inboxes for processing. There is no guarantee that a given message will be handled: for this to happen, an owner of the target chain needs to include it in the incoming_messages of one of their blocks. When that happens, the contract's execute_message method gets called on their chain.
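The send-then-include lifecycle can be modelled in a few lines of plain Rust. Everything below (MockRuntime, MessageBuilder, Network, the Credit message and the integer ChainId) is hypothetical and only mimics the call shape prepare_message(...).send_to(...); it is not the Linera SDK. The point is that delivering a message to an inbox changes nothing on the target chain until its owner includes the message in a block.

```rust
use std::collections::{HashMap, VecDeque};

/// Hypothetical chain identifier; real Linera chain IDs are hashes.
pub type ChainId = u32;

/// Hypothetical message type carried between chains.
#[derive(Clone, Debug, PartialEq)]
pub enum Message {
    Credit { amount: u64 },
}

/// Hypothetical runtime that only records the messages a block sends.
#[derive(Default)]
pub struct MockRuntime {
    outbox: Vec<(ChainId, Message)>,
}

/// Builder mirroring the shape `prepare_message(..).send_to(..)`.
pub struct MessageBuilder<'a> {
    runtime: &'a mut MockRuntime,
    message: Message,
}

impl MockRuntime {
    pub fn prepare_message(&mut self, message: Message) -> MessageBuilder<'_> {
        MessageBuilder { runtime: self, message }
    }
}

impl MessageBuilder<'_> {
    /// Queues the message for `destination`; nothing is delivered yet.
    pub fn send_to(self, destination: ChainId) {
        self.runtime.outbox.push((destination, self.message));
    }
}

/// Hypothetical network state: per-chain inboxes and balances.
#[derive(Default)]
pub struct Network {
    pub inboxes: HashMap<ChainId, VecDeque<Message>>,
    pub balances: HashMap<ChainId, u64>,
}

impl Network {
    /// Runs once the sending block has executed: moves the queued messages
    /// into the target chains' inboxes, where they wait.
    pub fn deliver(&mut self, runtime: &mut MockRuntime) {
        for (target, message) in runtime.outbox.drain(..) {
            self.inboxes.entry(target).or_default().push_back(message);
        }
    }

    /// The target chain's owner includes up to `count` pending messages in
    /// a block; only now does `execute_message` run for them.
    pub fn include_messages(&mut self, chain: ChainId, count: usize) {
        let inbox = self.inboxes.entry(chain).or_default();
        let n = count.min(inbox.len());
        let included: Vec<Message> = inbox.drain(..n).collect();
        for message in included {
            self.execute_message(chain, message);
        }
    }

    fn execute_message(&mut self, chain: ChainId, message: Message) {
        match message {
            Message::Credit { amount } => *self.balances.entry(chain).or_default() += amount,
        }
    }
}
```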

While preparing the message to be sent, it is possible to enable authentication forwarding and/or tracking. Authentication forwarding means that the message is executed by the receiver with the same authenticated signer as the sender of the message, while tracking means that the message is sent back to the sender if the receiver rejects it. The example below enables both flags:

self.runtime
    .prepare_message(message_contents)
    .with_tracking()
    .with_authentication()
    .send_to(destination_chain_id);

Example: fungible token

In the fungible example application, such a message can be the transfer of tokens from one chain to another. If the sender includes a Transfer operation on their chain, it decreases their account balance and sends a Credit message to the recipient's chain:

async fn execute_operation(&mut self, operation: Self::Operation) -> Self::Response {
    match operation {
        // ...
    }
}

On the recipient's chain, execute_message is called, which increases their account balance.

async fn execute_message(&mut self, message: Message) {
    match message {
        // ...
    }
}

Calling other Applications

We have seen that cross-chain messages sent by an application on one chain are always handled by the same application on the target chain.

This section is about calling other applications using cross-application calls.

Such calls happen on the same chain and are made with the helper method ContractRuntime::call_application:

The authenticated argument specifies whether the callee is allowed to perform actions that require authentication either

- on behalf of the signer of the original block that caused this call, or

- on behalf of the calling application.

The application argument is the callee's application ID, and A is the callee's ABI.

The call argument is the operation requested by the application call.
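The sketch below is a hypothetical, std-only model of what the authenticated flag changes for the callee. Fungible, FungibleOperation and this call_application helper are illustrative names, not the SDK's types or signature. Only the block-signer case is modelled, not authentication on behalf of the calling application.

```rust
use std::collections::HashMap;

/// Hypothetical stand-in for the callee's operation type.
pub enum FungibleOperation {
    Transfer { owner: String, amount: u64, target: String },
}

/// Hypothetical callee application holding balances on one chain.
pub struct Fungible {
    pub balances: HashMap<String, u64>,
}

impl Fungible {
    /// `authenticated_signer` is `Some` only if the caller forwarded
    /// authentication; debiting `owner` requires that it matches.
    pub fn execute(
        &mut self,
        authenticated_signer: Option<&str>,
        operation: FungibleOperation,
    ) -> Result<(), String> {
        match operation {
            FungibleOperation::Transfer { owner, amount, target } => {
                if authenticated_signer != Some(owner.as_str()) {
                    return Err(format!("{owner} did not authorize this transfer"));
                }
                let balance = self.balances.entry(owner.clone()).or_default();
                if *balance < amount {
                    return Err("insufficient balance".to_string());
                }
                *balance -= amount;
                *self.balances.entry(target).or_default() += amount;
                Ok(())
            }
        }
    }
}

/// Hypothetical caller-side helper: the block signer is forwarded to the
/// callee only when `authenticated` is true.
pub fn call_application(
    block_signer: &str,
    authenticated: bool,
    callee: &mut Fungible,
    call: FungibleOperation,
) -> Result<(), String> {
    let signer = if authenticated { Some(block_signer) } else { None };
    callee.execute(signer, call)
}
```

With authenticated set to false, the callee sees no signer and refuses to debit the owner's account; with true, the same transfer succeeds.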

Example: crowd-funding

The crowd-funding example application allows the application creator to launch a campaign with a funding target. That target can be an amount specified in any type of token based on the fungible application. Others can then pledge tokens of that type to the campaign, and if the target is not reached by the deadline, they are refunded.

If Alice used the fungible example to create a Pugecoin application (with an impressionable pug as its mascot), then Bob can create a crowd-funding application, use Pugecoin's application ID as CrowdFundingAbi::Parameters, and specify in CrowdFundingAbi::InstantiationArgument that his campaign will run for one week and has a target of 1000 Pugecoins.

Now let's say Carol wants to pledge 10 Pugecoin tokens to Bob's campaign. She can make her pledge by running linera service and sending the following GraphQL mutation to Bob's application:

mutation { pledge(owner: "User:841…6c0", amount: "10") }

This will add a block to Carol's chain containing the pledge operation that gets handled by CrowdFunding::execute_operation, resulting in one cross-application call and two cross-chain messages:

First, CrowdFunding::execute_operation calls the fungible application on Carol's chain to transfer 10 tokens to Carol's account on Bob's chain:

// ...
let call = fungible::Operation::Transfer {
    owner,
    amount,
    target_account,
};
// ...
self.runtime
    .call_application(/* authenticated by owner */ true, fungible_id, &call);

This causes Fungible::execute_operation to be run, which will create a cross-chain message sending the amount 10 to the Pugecoin application instance on Bob's chain.

After the cross-application call returns, CrowdFunding::execute_operation continues to create another cross-chain message, crowd_funding::Message::PledgeWithAccount, which informs the crowd-funding application on Bob's chain that the 10 tokens are meant for the campaign.

When Bob next adds a block to his chain that handles the two incoming messages, Fungible::execute_message is executed first, followed by CrowdFunding::execute_message. The latter makes another cross-application call to transfer the 10 tokens from Carol's account to the crowd-funding application's account (both on Bob's chain). That is successful because Carol now has 10 tokens on this chain and she authenticated the transfer indirectly by signing her block. The crowd-funding application now makes a note in its application state on Bob's chain that Carol has pledged 10 Pugecoin tokens.
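The ordering in this walkthrough matters: the crowd-funding message can only move Carol's tokens on Bob's chain after the fungible credit has arrived there. The following std-only simulation models that flow with hypothetical types (Ledger, Chain, Message and a "campaign" account). It simplifies deliberately: messages are pushed straight into Bob's inbox instead of being delivered after block execution, and authentication is not modelled.

```rust
use std::collections::HashMap;

/// Hypothetical name of the crowd-funding application's own account.
pub const CAMPAIGN: &str = "campaign";

/// Hypothetical per-chain Pugecoin ledger, keyed by owner name.
#[derive(Default)]
pub struct Ledger {
    pub balances: HashMap<String, u64>,
}

impl Ledger {
    pub fn credit(&mut self, owner: &str, amount: u64) {
        *self.balances.entry(owner.to_string()).or_default() += amount;
    }

    pub fn debit(&mut self, owner: &str, amount: u64) -> Result<(), String> {
        let balance = self.balances.get_mut(owner).ok_or("no such account")?;
        if *balance < amount {
            return Err(format!("{owner} has insufficient balance"));
        }
        *balance -= amount;
        Ok(())
    }
}

/// Hypothetical cross-chain messages, in the order Carol's block emits them.
pub enum Message {
    FungibleCredit { owner: String, amount: u64 },
    PledgeWithAccount { owner: String, amount: u64 },
}

/// Hypothetical chain state: a ledger, crowd-funding pledges and an inbox.
#[derive(Default)]
pub struct Chain {
    pub ledger: Ledger,
    pub pledges: HashMap<String, u64>,
    pub inbox: Vec<Message>,
}

/// Carol's block: the fungible transfer debits her on her own chain and
/// sends a credit message to Bob's chain; the pledge message follows it.
pub fn pledge(carol_chain: &mut Chain, bob_chain: &mut Chain, owner: &str, amount: u64) -> Result<(), String> {
    carol_chain.ledger.debit(owner, amount)?;
    bob_chain.inbox.push(Message::FungibleCredit { owner: owner.to_string(), amount });
    bob_chain.inbox.push(Message::PledgeWithAccount { owner: owner.to_string(), amount });
    Ok(())
}

/// Bob's block: handles the incoming messages in the order they arrived.
pub fn process_inbox(chain: &mut Chain) -> Result<(), String> {
    for message in std::mem::take(&mut chain.inbox) {
        match message {
            // Fungible side: credit the owner's account on this chain.
            Message::FungibleCredit { owner, amount } => chain.ledger.credit(&owner, amount),
            // Crowd-funding side: a local transfer moves the tokens to the
            // campaign account, then the pledge is recorded.
            Message::PledgeWithAccount { owner, amount } => {
                chain.ledger.debit(&owner, amount)?;
                chain.ledger.credit(CAMPAIGN, amount);
                *chain.pledges.entry(owner).or_default() += amount;
            }
        }
    }
    Ok(())
}
```

If the two messages were handled in the opposite order, the debit in the PledgeWithAccount branch would fail, because Carol would not yet have an account on Bob's chain.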

References

For the complete code, please take a look at the crowd-funding and the fungible application contracts in the examples folder in linera-protocol.

The implementation of the Runtime made available to contracts is defined in this file.

Using Data Blobs

Some applications may want to use static assets, like images or other data. For example, the non-fungible example application implements NFTs, and each NFT has an associated image.

Data blobs are pieces of binary data that, once published on any chain, can be used on all chains. What format they are in and what they are used for is determined by the application(s) that read(s) them.

You can use the linera publish-data-blob command to publish the contents of a file, as an operation in a block on one of your chains. This will print the ID of the new blob, including its hash. Alternatively, you can run linera service and use the publishDataBlob GraphQL mutation.

Applications can now use runtime.read_data_blob(blob_hash) to read the blob. This works on any chain, not only the one that published it. The first time your client executes a block reading a blob, it will download the blob from the validators if it doesn't already have it locally.

In the case of the NFT app, it is only the service, not the contract, that actually uses the blob data to display it as an image in the frontend. But we still want to make sure that the user has the image locally as soon as they receive an NFT, even if they don't view it yet. This can be achieved by calling runtime.assert_data_blob_exists(blob_hash) in the contract: it will make sure the data is available, without actually loading it.

For the complete code, please take a look at the non-fungible contract and service.
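A blob is addressed by the hash of its contents, so any chain can refer to it, and a client fetches it only when first needed. The hypothetical model below illustrates the two access patterns described above. Its pieces are Validators, Client, and blob_hash, which is built on std's DefaultHasher; that hasher is not cryptographic and only stands in for the real hash. In the model, read_data_blob returns the contents, while assert_data_blob_exists only makes sure the blob is available locally. These are illustrative methods, not the runtime's API.

```rust
use std::collections::hash_map::DefaultHasher;
use std::collections::HashMap;
use std::hash::{Hash, Hasher};

/// Hypothetical blob ID: a content hash (non-cryptographic stand-in).
pub type BlobHash = u64;

pub fn blob_hash(bytes: &[u8]) -> BlobHash {
    let mut hasher = DefaultHasher::new();
    bytes.hash(&mut hasher);
    hasher.finish()
}

/// Hypothetical validator-side store of blobs published from any chain.
#[derive(Default)]
pub struct Validators {
    blobs: HashMap<BlobHash, Vec<u8>>,
}

impl Validators {
    /// Publishing returns the ID, which is derived from the contents.
    pub fn publish(&mut self, bytes: Vec<u8>) -> BlobHash {
        let hash = blob_hash(&bytes);
        self.blobs.insert(hash, bytes);
        hash
    }
}

/// Hypothetical client with a local cache and a download counter.
#[derive(Default)]
pub struct Client {
    local: HashMap<BlobHash, Vec<u8>>,
    pub downloads: usize,
}

impl Client {
    /// Downloads the blob from the validators unless it is already local.
    fn ensure_local(&mut self, validators: &Validators, hash: BlobHash) -> Option<()> {
        if !self.local.contains_key(&hash) {
            let bytes = validators.blobs.get(&hash)?.clone();
            self.local.insert(hash, bytes);
            self.downloads += 1;
        }
        Some(())
    }

    /// Models reading a blob: returns its contents.
    pub fn read_data_blob(&mut self, validators: &Validators, hash: BlobHash) -> Option<&[u8]> {
        self.ensure_local(validators, hash)?;
        self.local.get(&hash).map(Vec::as_slice)
    }

    /// Models asserting existence: makes the blob available locally
    /// without handing its contents to the caller.
    pub fn assert_data_blob_exists(&mut self, validators: &Validators, hash: BlobHash) -> bool {
        self.ensure_local(validators, hash).is_some()
    }
}
```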

Printing Logs from an Application

Applications can use the log crate to print log messages with different levels of importance. Log messages are useful during development, but they may also be useful for end users. By default, the linera service command will log the messages from an application if they are of the "info" importance level or higher (i.e. log::info!, log::warn! and log::error!).

During development, it is often useful to log messages of lower importance (such as log::debug! and log::trace!). To enable them, the RUST_LOG environment variable must be set before running linera service. The example below enables trace level messages from applications and warning level messages from other parts of the linera binary:

export RUST_LOG="warn,linera_execution::wasm=trace"
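The RUST_LOG value above combines a default level (warn) with a per-target override (linera_execution::wasm=trace). The sketch below is a hypothetical and much simplified model of how such a filter decides whether a message is shown. In this model, an override applies to the named target and its submodules, the longest matching target wins, and everything else falls back to the default. Real filter implementations support more syntax than this.

```rust
/// Log levels, ordered from most to least important.
#[derive(Clone, Copy, Debug, PartialEq, Eq, PartialOrd, Ord)]
pub enum Level {
    Error,
    Warn,
    Info,
    Debug,
    Trace,
}

fn parse_level(s: &str) -> Option<Level> {
    match s.trim().to_ascii_lowercase().as_str() {
        "error" => Some(Level::Error),
        "warn" => Some(Level::Warn),
        "info" => Some(Level::Info),
        "debug" => Some(Level::Debug),
        "trace" => Some(Level::Trace),
        _ => None,
    }
}

/// Hypothetical, simplified RUST_LOG-style filter.
pub struct Filter {
    default: Option<Level>,
    directives: Vec<(String, Level)>,
}

impl Filter {
    /// Parses comma-separated directives of the form `level` or
    /// `target=level`; unrecognized levels are ignored.
    pub fn parse(spec: &str) -> Self {
        let mut filter = Filter { default: None, directives: Vec::new() };
        for part in spec.split(',').map(str::trim).filter(|p| !p.is_empty()) {
            match part.split_once('=') {
                Some((target, level)) => {
                    if let Some(level) = parse_level(level) {
                        filter.directives.push((target.trim().to_string(), level));
                    }
                }
                None => {
                    if let Some(level) = parse_level(part) {
                        filter.default = Some(level);
                    }
                }
            }
        }
        filter
    }

    /// True if a message at `level` from module path `target` is shown.
    pub fn enabled(&self, target: &str, level: Level) -> bool {
        let max = self
            .directives
            .iter()
            // A directive covers its target and that target's submodules.
            .filter(|(prefix, _)| {
                target == prefix.as_str() || target.starts_with(format!("{prefix}::").as_str())
            })
            // The most specific (longest) matching target wins.
            .max_by_key(|(prefix, _)| prefix.len())
            .map(|(_, max)| *max)
            .or(self.default);
        max.map_or(false, |max| level <= max)
    }
}
```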

Writing Tests